Abstract

Knowledge-Based Visual Question Answering (KB-VQA) requires models to answer questions about an image by integrating external knowledge, posing significant challenges due to noisy retrieval and the structured, encyclopedic nature of the knowledge base. These characteristics create a distributional gap from pretrained multimodal large language models (MLLMs), making effective reasoning and domain adaptation difficult in the post-training stage. We propose Wiki-R1, a data-generation-based curriculum reinforcement learning framework that systematically incentivizes reasoning in MLLMs for KB-VQA. Wiki-R1 constructs a sequence of training distributions aligned with the model’s evolving capability, bridging the gap from pretraining to the KB-VQA target distribution. We introduce controllable curriculum data generation and a curriculum sampling strategy that selects informative samples likely to yield non-zero advantages during RL updates. Experiments on Encyclopedic VQA and InfoSeek demonstrate state-of-the-art results, improving accuracy from 35.5% to 37.1% on Encyclopedic VQA and from 40.1% to 44.1% on InfoSeek.

Motivation

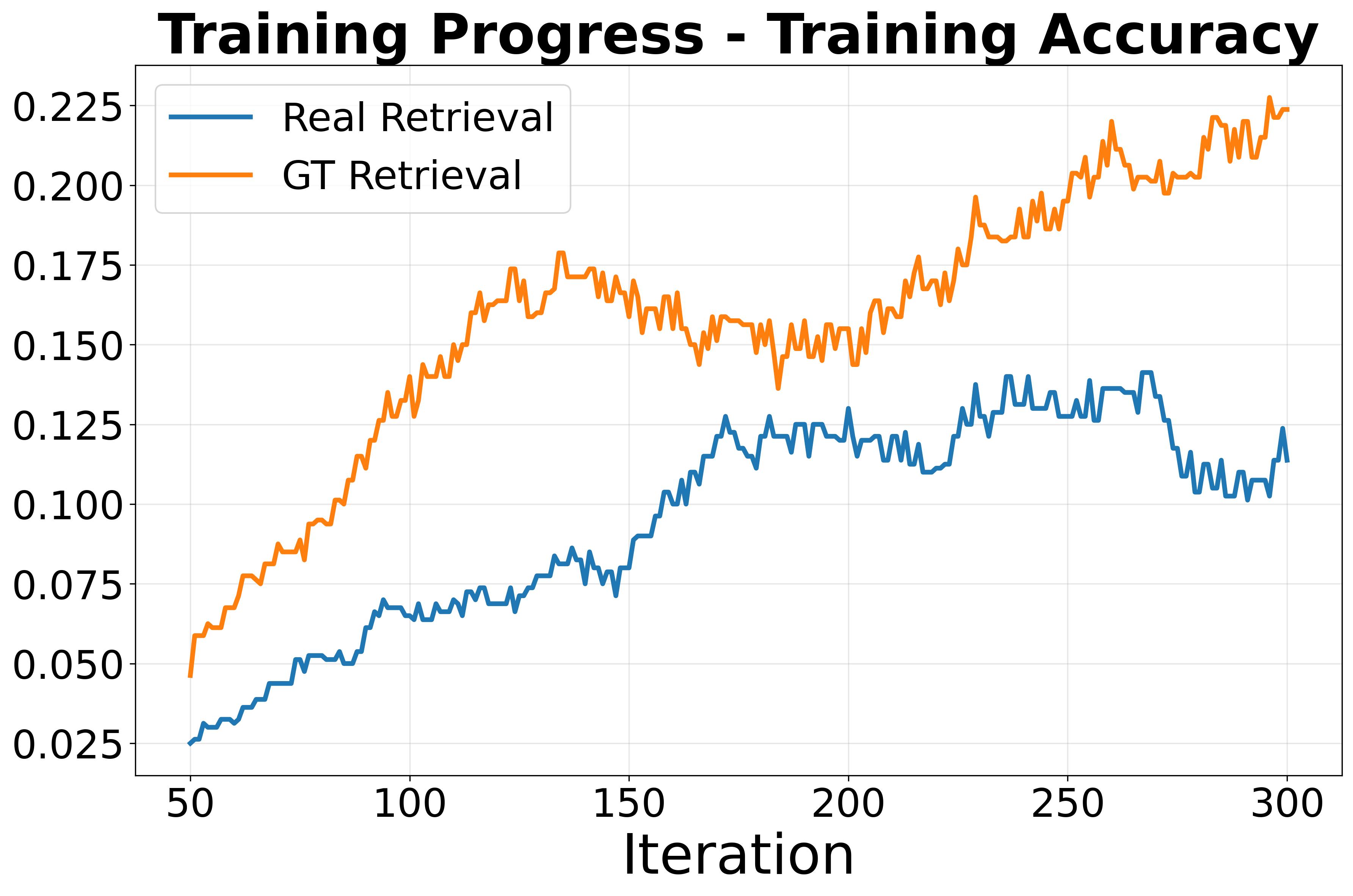

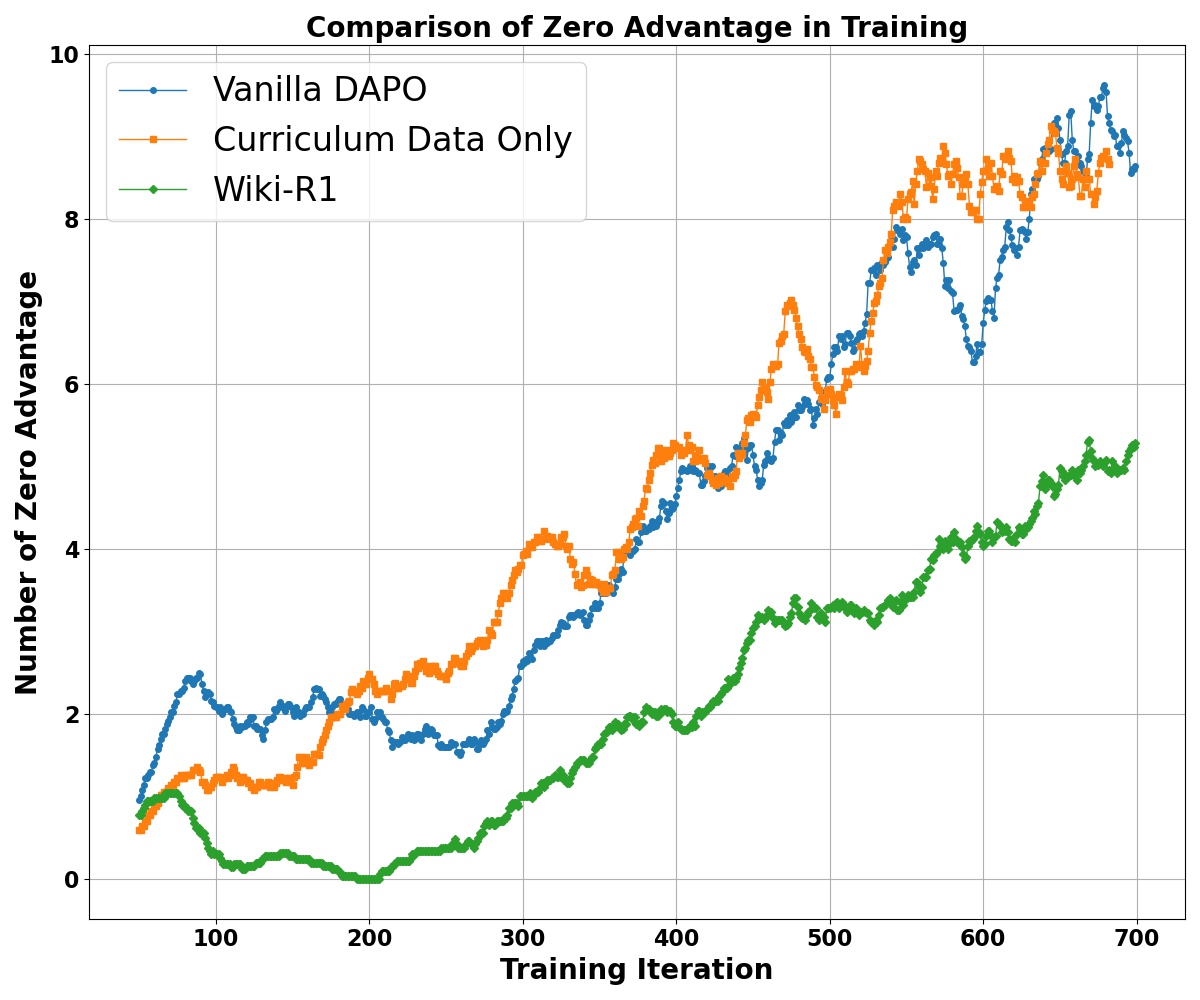

Training accuracy remains low under vanilla RL on KB-VQA, highlighting the distribution gap.

A high proportion of zero-advantage trajectories causes sparse learning signals.

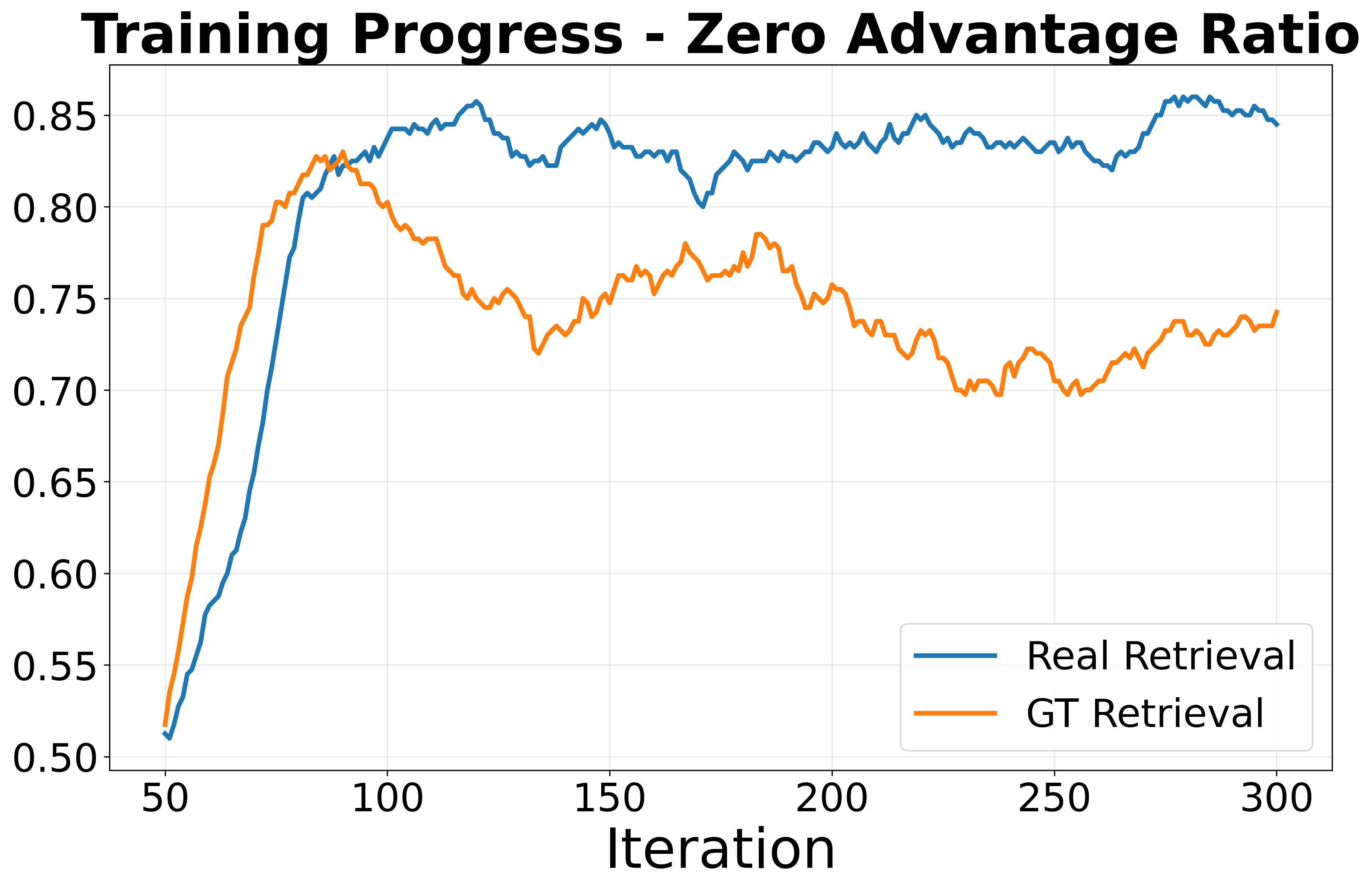

Wiki-R1 narrows the gap by constructing a curriculum of training distributions.

RL for KB-VQA faces severe sparse rewards due to noisy retrieval and the encyclopedic structure of the knowledge base. Wiki-R1 addresses this by creating a learnable curriculum over data difficulty and sampling informative examples that yield effective gradients.

Method

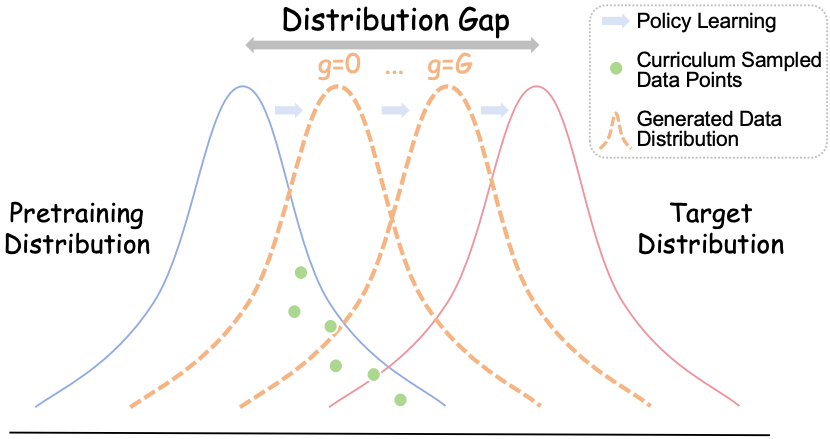

Wiki-R1 pipeline. Left: controllable curriculum data generation bridges pretraining and KB-VQA distributions. Right: curriculum sampling with observation propagation selects informative samples with non-zero advantages.

Wiki-R1 manipulates the retriever to generate training data with controllable difficulty, then schedules samples using observed rewards and label propagation across related knowledge-base articles. This joint design stabilizes RL optimization under noisy retrieval.

Experiments

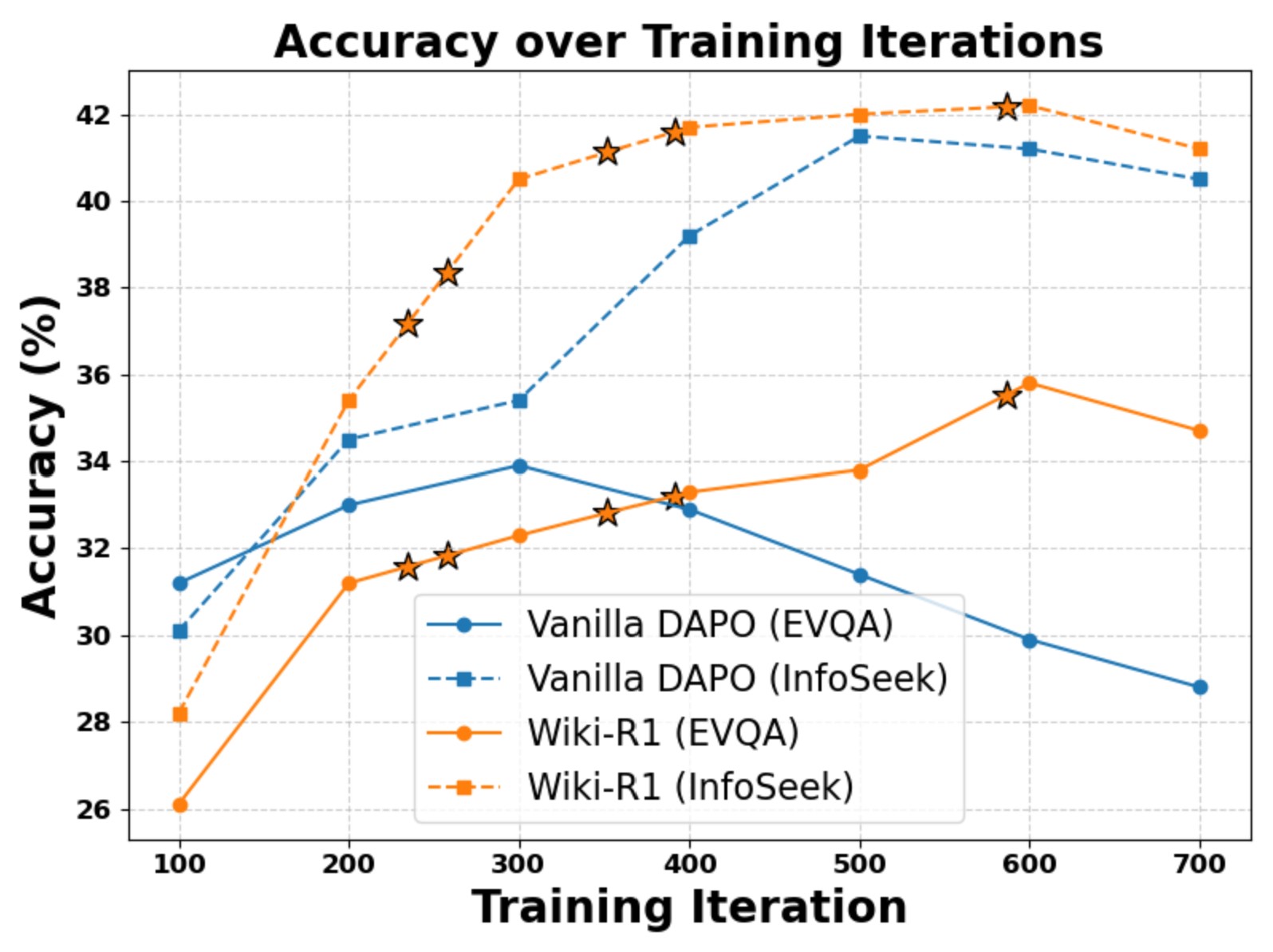

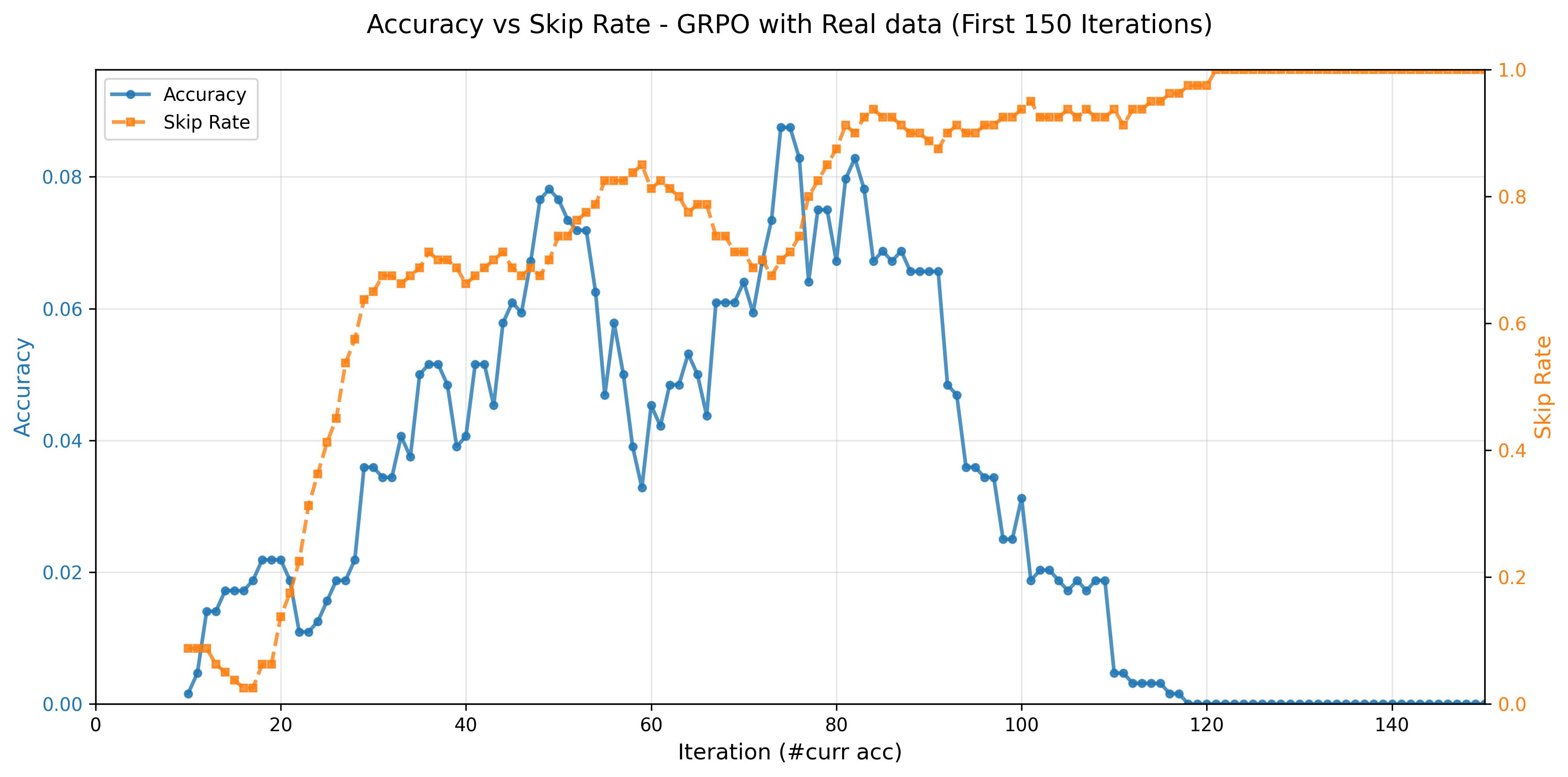

Observation propagation reduces ignored trajectories and improves training efficiency.

Wiki-R1 achieves stable improvements across EVQA and InfoSeek with curriculum progression.

Better learning signals correlate with higher accuracy and lower skip rates.

On Encyclopedic VQA and InfoSeek, Wiki-R1 reaches 37.1% and 44.1% accuracy, respectively, and achieves 47.8% on InfoSeek’s Unseen-Question split. It also generalizes well to ViQuAE in zero-shot transfer.

Visualization

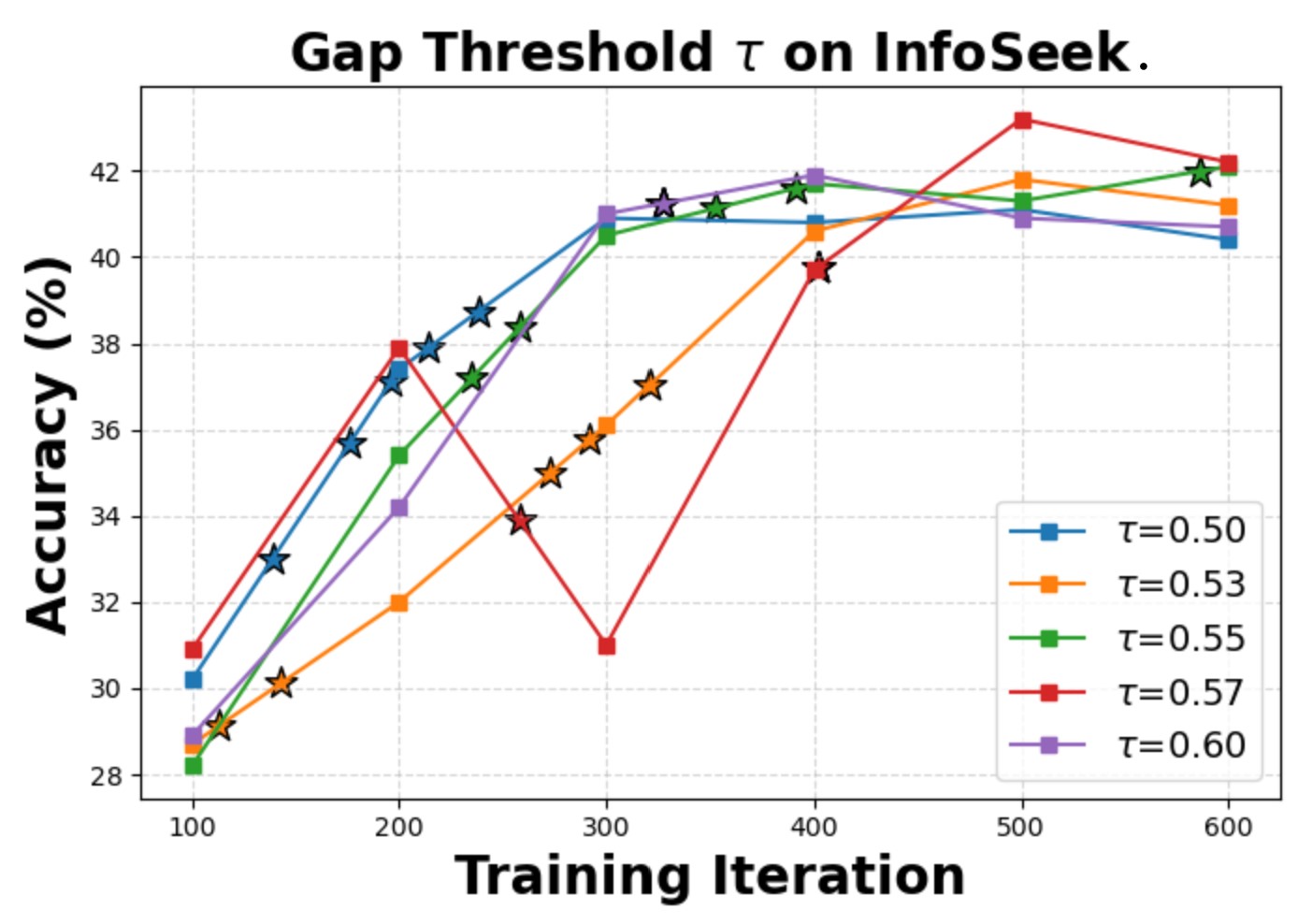

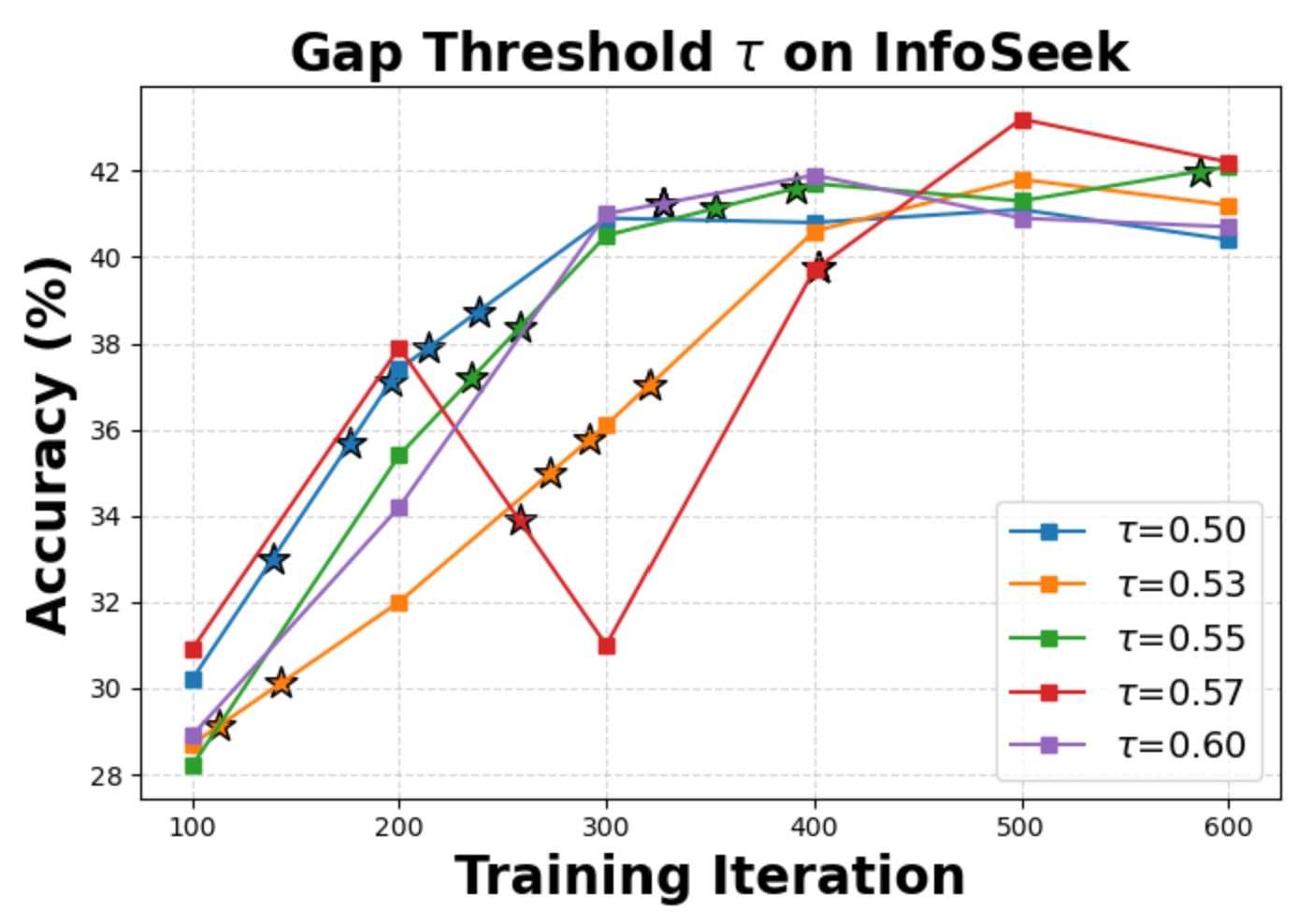

Sensitivity analysis of curriculum parameters.

Robustness trends across gap levels.

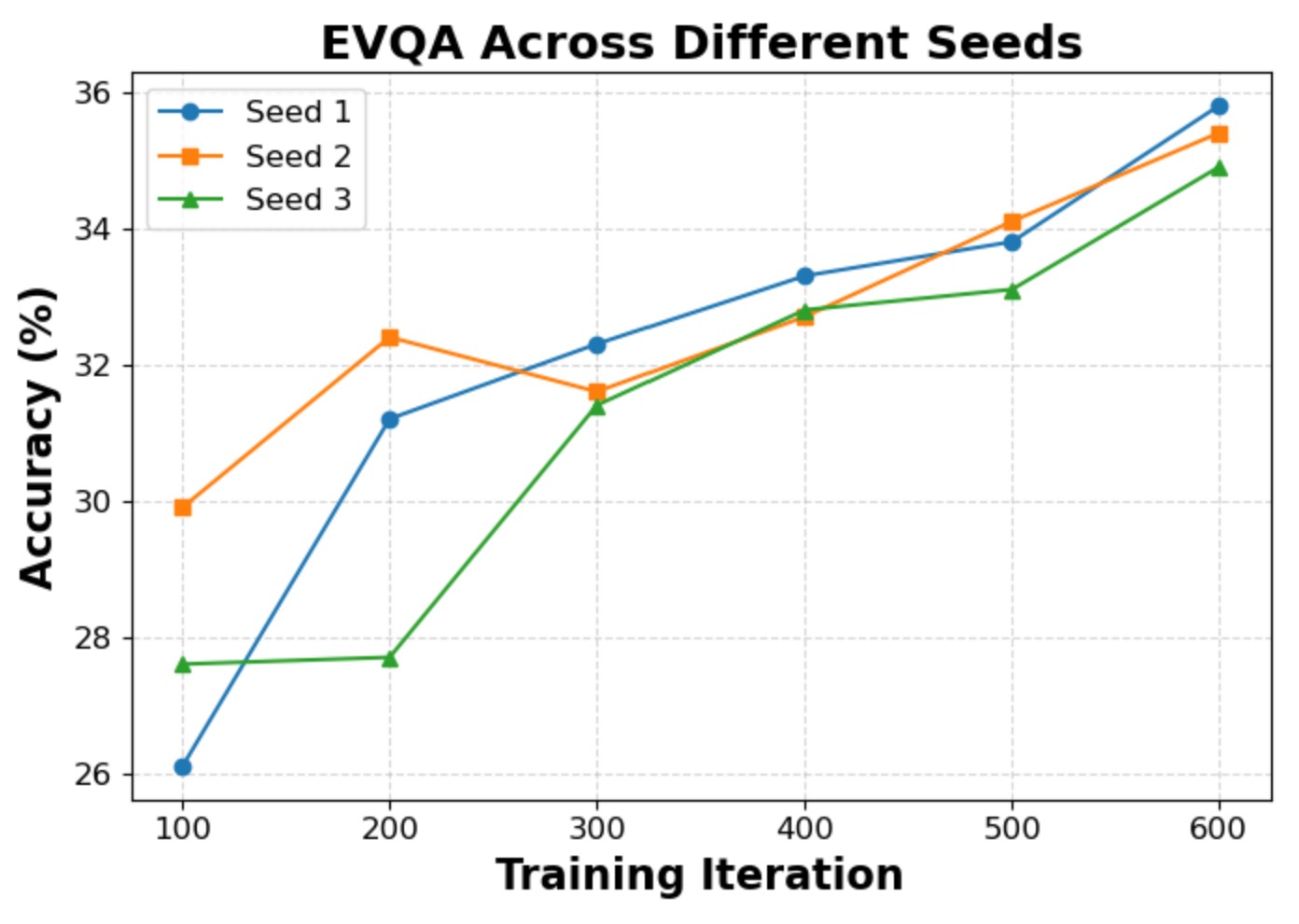

Stability across random seeds (setting A).

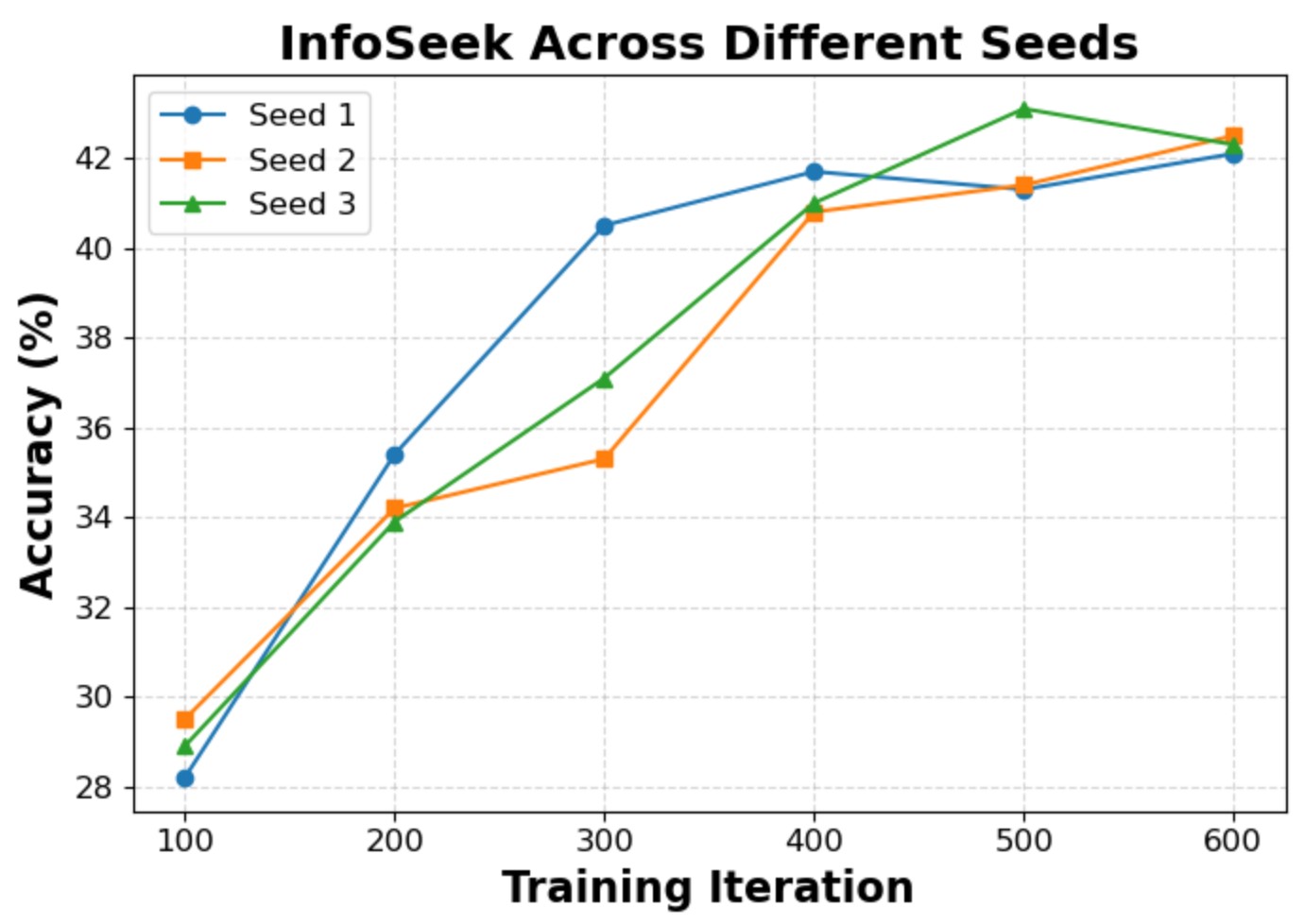

Stability across random seeds (setting B).

BibTeX

@inproceedings{ningwiki,

title={Wiki-R1: Incentivizing Multimodal Reasoning for Knowledge-based VQA via Data and Sampling Curriculum},

author={Ning, Shan and Qiu, Longtian and He, Xuming},

booktitle={The Fourteenth International Conference on Learning Representations}

}